AI Film Intro

Self-initiated experiment testing whether an AI-first filmmaking workflow can achieve consistent character detail and cinematic continuity

"AI filmmaking won't reward prompts. It will reward people who can turn creative direction into a system."

"Consistency is workflow-dependent, not tool-dependent. The differentiator isn't access to the newest model. It's pipeline design, iteration discipline, and taste."

A self-initiated experiment proving that AI filmmaking demands workflow design, not just tool access. By treating a deliberately difficult brief (a character with fine-grain detail) as a production system test, the project delivered coherent cinematic output and a documented pipeline model.

A self-initiated experiment to test whether an AI-first pipeline can produce a short film intro with real cinematic control (not just isolated good-looking frames). The goal was to stress-test character consistency, visual style continuity, environment coherence, and the practical limits of today's generative toolchain.

The result: a fully AI-produced intro film, a repeatable workflow model, and a clear answer. AI filmmaking won't reward prompts. It will reward people who can turn creative direction into a system.

AI video is advancing faster than anyone can track. A year and a half before this experiment, we were laughing at a deformed AI video of Will Smith eating spaghetti. Today, keeping up with model releases is nearly impossible. But speed of output isn't the same as quality of craft.

The real problem is continuity. Most AI-generated video still breaks where filmmaking actually matters. Characters drift between shots, micro-details mutate, visual language collapses, and there's no reliable way to maintain coherence across a sequence. Good-looking single frames are easy. A coherent narrative is not.

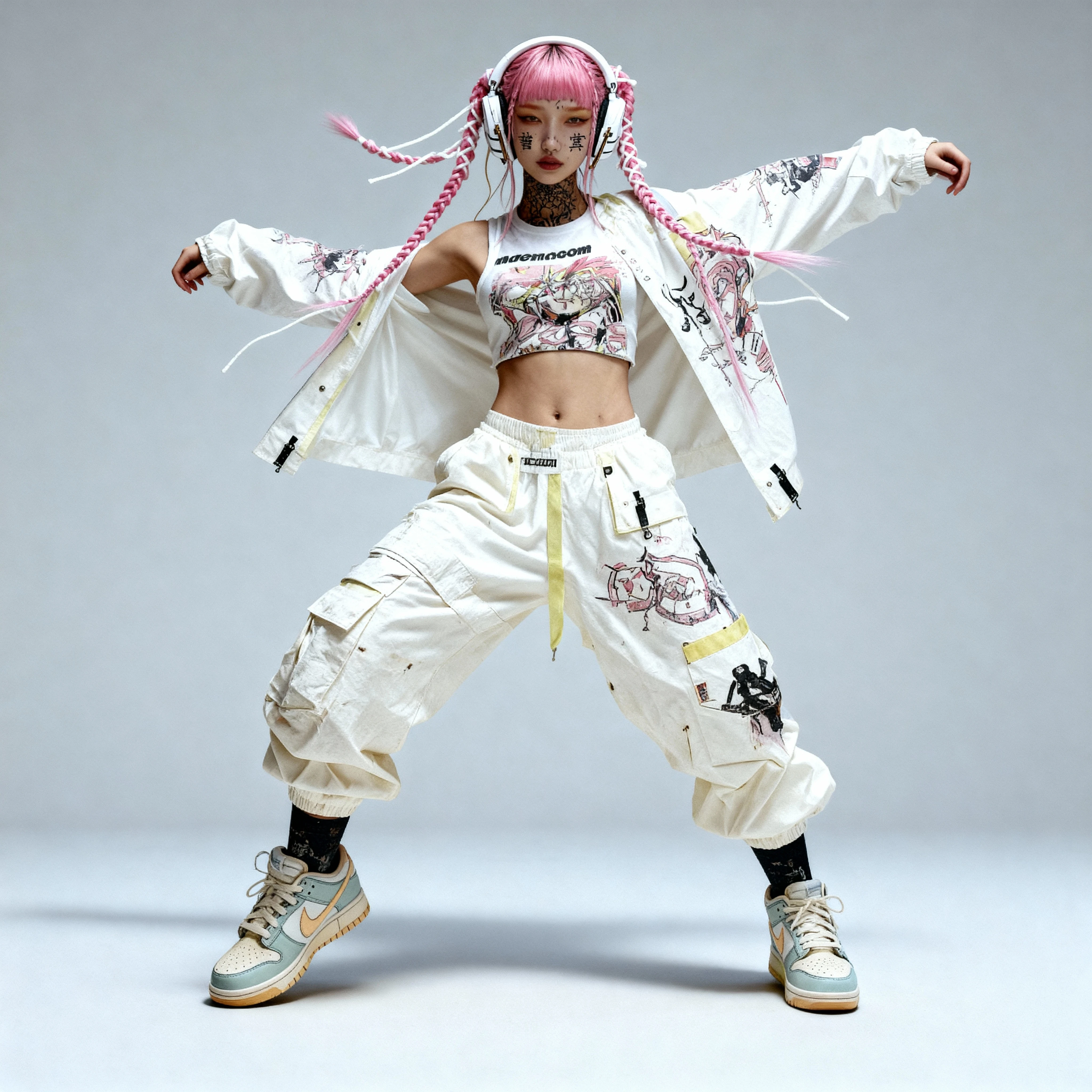

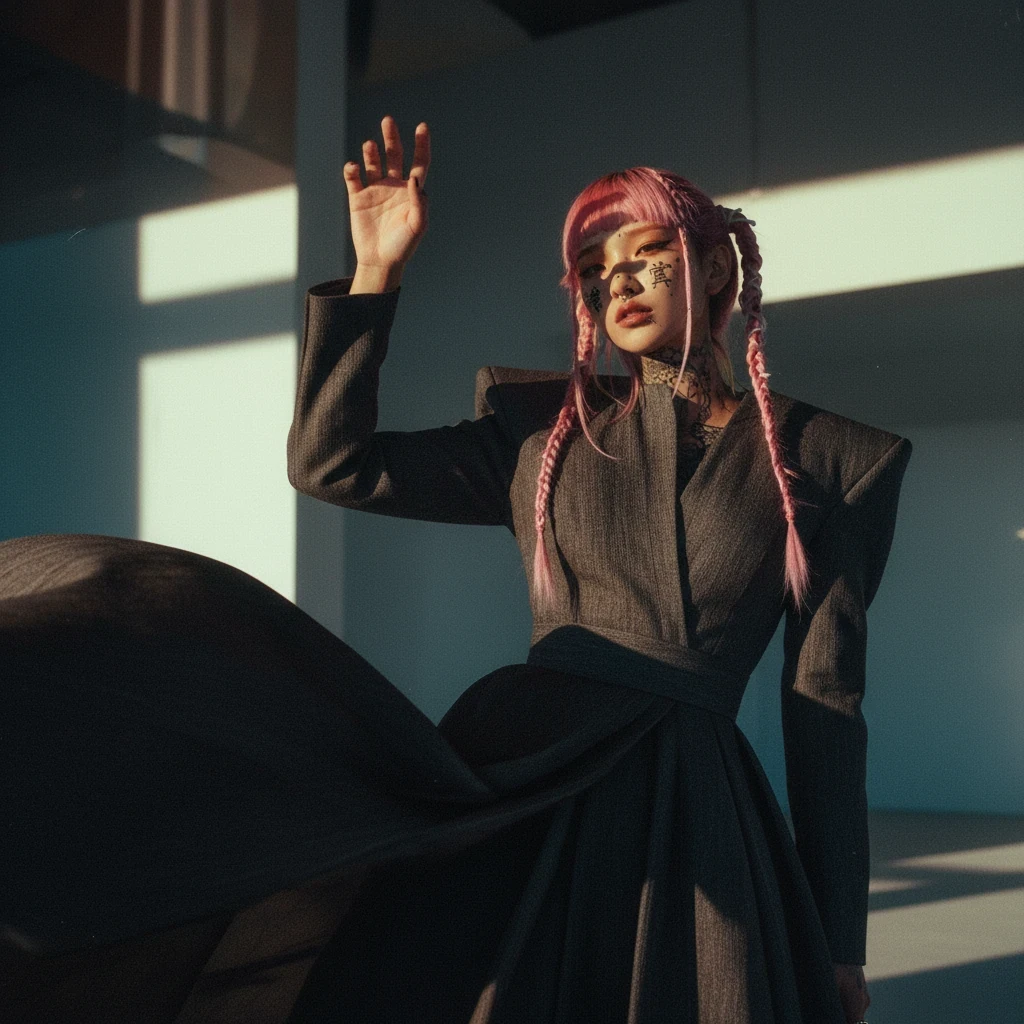

The test needed to be deliberately hard. I designed a character covered in kanji tattoos as a visual brief that punishes inconsistency. Small deviations are instantly visible. If the pipeline could hold fine-grain detail across multiple generations, it could hold anything.

Treat it like a production system test, not a creative experiment. The point wasn't to "make something cool with AI." It was to prove or disprove a pipeline. Can you build a repeatable workflow that gives a creative director actual control over AI-generated moving images?

Define consistency criteria before generating anything. Before a single frame was produced, I established what must stay fixed (character details, tattoo placement, colour palette, lighting logic) and what could vary (camera angle, environment, atmospheric mood). This constraint framework is what made coherence possible.

Separate the pipeline into controllable stages. Instead of throwing prompts at a single tool and hoping, I broke the process into distinct phases, each with its own tool, purpose, and quality gate: Ideation → Character design → Consistency lock → Motion generation → Sound → Edit. Each handoff was a potential failure point. Mapping them was half the work.
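To make the idea of fixed versus variable attributes and gated handoffs concrete, here is a minimal Python sketch. It is not the tooling used in this project. The stage names come from the workflow above, but `ConsistencySpec`, `Stage`, `first_failed_handoff`, the attribute keys and the `ref-01` reference ID are hypothetical. The sketch also assumes each shot has already been tagged with attributes by a reviewer, and it models only the quality gates, not the generation tools themselves.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical sketch: stage names follow the workflow described in the text;
# attribute keys, reference IDs and gate logic are illustrative assumptions.

@dataclass
class Shot:
    """A generated asset plus the attributes a reviewer has tagged on it."""
    name: str
    attributes: dict[str, str]

@dataclass
class ConsistencySpec:
    """What must stay fixed versus what is allowed to vary between shots."""
    fixed: dict[str, str]
    variable: set[str] = field(default_factory=set)

    def violations(self, shot: Shot) -> list[str]:
        # A fixed attribute that is missing or changed counts as drift;
        # attributes listed in `variable` are never checked.
        return [key for key, ref in self.fixed.items()
                if shot.attributes.get(key) != ref]

@dataclass
class Stage:
    name: str
    gate: Callable[[Shot], bool]  # quality gate checked at the handoff

def first_failed_handoff(shot: Shot, stages: list[Stage]) -> Optional[str]:
    """Return the name of the first stage whose gate rejects the shot, if any."""
    for stage in stages:
        if not stage.gate(shot):
            return stage.name
    return None

spec = ConsistencySpec(
    fixed={key: "ref-01" for key in
           ("character_details", "tattoo_placement", "colour_palette", "lighting_logic")},
    variable={"camera_angle", "environment", "atmospheric_mood"},
)

def locked(shot: Shot) -> bool:
    # Passes only if no fixed attribute has drifted from the reference.
    return not spec.violations(shot)

def human_review(shot: Shot) -> bool:
    # Hypothetical placeholder for a manual creative check; always passes here.
    return True

stages = [
    Stage("Ideation", human_review),
    Stage("Character design", human_review),
    Stage("Consistency lock", locked),
    Stage("Motion generation", locked),
    Stage("Sound", human_review),
    Stage("Edit", locked),
]

# Usage: a shot that only changes camera angle passes every gate,
# while a shot whose tattoo placement drifts is stopped at the lock stage.
good = Shot("shot_03", {**spec.fixed, "camera_angle": "low"})
drift = Shot("shot_04", {**spec.fixed, "tattoo_placement": "ref-02"})
print(first_failed_handoff(good, stages))   # None
print(first_failed_handoff(drift, stages))  # Consistency lock
print(spec.violations(drift))               # ['tattoo_placement']
```

The design mirrors the constraint framework: attributes in `variable` are simply never checked, so camera angle or environment can change freely, while any drift in a fixed attribute stops the shot at the first gate that inspects it.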

Direct for stability, not novelty. I used repeated visual cues and controlled variations to reduce the model's room to "freestyle." The direction was restrictive by design. In filmmaking, consistency is the craft.

Character Design & Art Direction

Designed the protagonist in Midjourney, establishing the tattoo system, body language, colour world, and visual tone. Every subsequent tool in the pipeline was measured against this reference.

Consistency Development

Used Nano Banana and Seedream 4 to develop and lock the character across poses and contexts. This is the critical step that most AI workflows skip entirely, and it is where coherence is built or lost.

Multi-Model Video Generation

Generated motion across six different models: Veo 3.1, Kling 2.5, LTX, Sora, Runway, and SeedanceWaver (open source). I deliberately tested how each handled consistency, motion quality, and style fidelity. The goal wasn't to pick a winner. It was to understand which tool excels at what, and how to orchestrate them.

Sound Design & Voice

Music sourced from Artlist (Yarin Primak, "Back to Base"). Sound effects produced through Artlist and ElevenLabs. Audio was treated as a directorial layer, not an afterthought. Pacing and emphasis were shaped to match the visual rhythm of the edit.

Editorial Assembly

Edited in iMovie, a deliberately lightweight choice that kept the focus on AI output quality and workflow design rather than post-production rescue. The edit had to prove the pipeline, not compensate for it.

A fully AI-produced short film intro with consistent character detail, stable visual language, and coherent narrative flow. It demonstrates that the pipeline holds up when the workflow is designed with the same rigour as traditional production.

A practical, documented workflow model covering the full chain: character design, consistency development, multi-model generation strategy, audio production, and editorial logic. This includes a map of failure modes (what breaks first, where handoffs collapse, and why).

A core finding: consistency is workflow-dependent, not tool-dependent. The differentiator isn't access to the newest model; it's pipeline design, iteration discipline, and taste. The same tools that produce incoherent noise in one workflow can deliver controlled, directed results in another.

A strategic read on the moment. This feels like crypto in 2017: chaotic discovery, new tools daily, open-source versus centralisation tensions, and a rapid acceleration curve. The creative landscape is shifting not away from creativity, but toward creativity at scale. Ideas that lived on paper can now move into motion. The next great filmmakers may emerge from the most unexpected places.